Hello!

I’m half a year into GA now: amazed, overwhelmed, confused (GA, PGA, CGA?). GA inspires me greatly. Besides infinitesimals, hyperreal numbers and surreal numbers, it is one of the things in math that I always missed in school and university. It just felt like it had to be there, but no one could name it or point me to it, and now I have finally found it.

I could think of a gazillion problems that seem easy with GA or that lend themselves to it, but as a starter I want to tackle a single problem, to learn how to do “real” problems with GA.

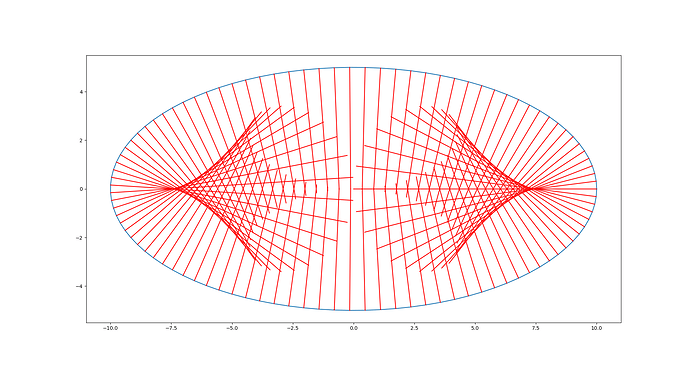

I wonder how one would model the reflection, at a parabola, of lines going through a point (the “source”).

First I would like to solve the obvious case: the source at the focus of the parabola.
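For the focus case, a quick numerical sanity check is possible before any GA machinery: intersect rays from the focus with the parabola and reflect them at the normal. The sketch below assumes the parabola y = x²/(4f) with focus (0, f), angles θ measured from the x-axis, and the plain vector reflection d' = d - 2(d·n)n/|n|², which is what the GA sandwich -n d n computes for a unit normal n. The function names are illustrative only.

```python
import math

def hit_parabola_from_focus(f, theta):
    """Hit point of the ray from the focus (0, f) with direction (cos theta, sin theta)
    on the parabola y = x**2 / (4*f)."""
    s = math.sin(theta)
    if s >= 1.0:
        raise ValueError("the ray along the axis never meets the parabola")
    r = 2.0 * f / (1.0 - s)  # polar equation of the parabola about its focus
    return (r * math.cos(theta), f + r * s)

def reflect(d, n):
    """Reflect direction d in a mirror line with normal n (n need not be unit length)."""
    k = 2.0 * (d[0] * n[0] + d[1] * n[1]) / (n[0] ** 2 + n[1] ** 2)
    return (d[0] - k * n[0], d[1] - k * n[1])

def reflected_direction(f, theta):
    x, y = hit_parabola_from_focus(f, theta)
    normal = (2.0 * x, -4.0 * f)  # gradient of x**2 - 4*f*y at the hit point
    return reflect((math.cos(theta), math.sin(theta)), normal)

f = 1.5
for deg in (-90, -60, -10, 0, 45, 80):
    dx, dy = reflected_direction(f, math.radians(deg))
    print(f"{deg:4d} deg -> ({dx:+.6f}, {dy:+.6f})")
```

Every reflected direction should come out as (approximately) (0, 1), i.e. the pencil through the focus turns into a pencil through the point at infinity in the axis direction. The ray at θ = 90° runs along the axis and never meets the parabola, which is why it is excluded.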

Then I’d like to look into other source points, and after that into other curves acting as reflectors. Then I’d like to look into the “density” of lines, and even into things like the incidence angle (at an intersection), occlusion (Heaviside function), variation of parameters, the 3D case…

Applications could be modeling a lamp like the Poul Henningsen PH 5, or a Wolter telescope.

Things where I am stuck/unsure and need help:

- Which GA is best suited (for the 2D case, later maybe 3D)?

- How do I describe a curve or function in GA? For example a parabola, in standard form and rotated?

- How do I reflect at a curve? I would of course need the tangent at the point of reflection…

- The source is a pencil of lines, which has a known representation in some GAs. If the source is at the focus of the parabola, the outcome has to be a pencil of lines through a point at infinity (i.e. parallel lines). But I’m not sure whether the density is uniform, or how to analyse that. Of course, in a more general case the outcome is more complicated.

- How do I analyse/model different densities of pencils?
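On describing a curve: one common approach, in projective terms that carry over to 2D PGA, is parametric. Take a point P(s) for each parameter value plus its derivative, which is a direction, i.e. an ideal point (point at infinity). The tangent line is then the join of P(s) with that ideal point. In 2D PGA a line ax + by + c = 0 is the vector a e1 + b e2 + c e0, and, up to sign and scale, the join of two points matches the cross product of their homogeneous coordinates. The sketch below uses that cross product directly instead of a full PGA implementation; all helper names are hypothetical. A rotated or translated parabola would come from applying the rigid motion (in PGA, a motor) to P(s).

```python
def cross(u, v):
    """Cross product of homogeneous triples; the join of two points (up to sign and scale)."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def parabola_point(f, s):
    """Homogeneous point (x, y, 1) on y = x**2 / (4*f), parametrised by its x-coordinate s."""
    return (s, s * s / (4.0 * f), 1.0)

def parabola_direction(f, s):
    """dP/ds as an ideal point (dx, dy, 0): the tangent direction at parameter s."""
    return (1.0, s / (2.0 * f), 0.0)

def tangent_line(f, s):
    """Line coefficients (a, b, c) of a*x + b*y + c = 0, i.e. a e1 + b e2 + c e0 in 2D PGA."""
    return cross(parabola_point(f, s), parabola_direction(f, s))

f, s = 1.0, 3.0
a, b, c = tangent_line(f, s)
x, y, w = parabola_point(f, s)
print((a, b, c))              # (-1.5, 1.0, 2.25)
print(a * x + b * y + c * w)  # 0.0: the point lies on its own tangent line
```

The first two coefficients (a, b) are the line's normal direction, which is what a reflection at the curve needs.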
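On reflecting at a curve: the GA step is a sandwich product. Once the unit normal n of the curve at the hit point is known (from the tangent), a direction d is reflected in the tangent line by d' = -n d n, which expands to d - 2(d·n)n. For directions only the mirror's orientation matters, so the mirror can be taken through the origin. Below is a minimal, illustrative sketch of the 2D Euclidean algebra G(2,0), with multivectors stored as tuples (scalar, e1, e2, e12); a GA library would provide this product ready-made. In 2D PGA the same sandwich idea reflects points and lines in a line, but that is not implemented here.

```python
import math

def gp(a, b):
    """Geometric product in G(2,0); multivectors are tuples (scalar, e1, e2, e12)."""
    a0, a1, a2, a3 = a
    b0, b1, b2, b3 = b
    return (
        a0 * b0 + a1 * b1 + a2 * b2 - a3 * b3,  # scalar (e1*e1 = e2*e2 = 1, e12*e12 = -1)
        a0 * b1 + a1 * b0 - a2 * b3 + a3 * b2,  # e1
        a0 * b2 + a2 * b0 + a1 * b3 - a3 * b1,  # e2
        a0 * b3 + a3 * b0 + a1 * b2 - a2 * b1,  # e12
    )

def vector(x, y):
    return (0.0, x, y, 0.0)

def reflect_in_mirror(d, n):
    """Reflect vector d in the mirror line through the origin with unit normal n: -n d n."""
    return tuple(-c for c in gp(gp(n, d), n))

# Parabola y = x**2 / 4 (f = 1), focus (0, 1). The horizontal ray from the focus hits
# the parabola at (2, 1), where the gradient of x**2 - 4*y, i.e. the normal, is (4, -4).
length = math.hypot(4.0, -4.0)
n = vector(4.0 / length, -4.0 / length)
d = vector(1.0, 0.0)
print(reflect_in_mirror(d, n))  # approximately (0, 0, 1, 0): the ray leaves parallel to the axis
```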
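On whether the density is uniform in the focus case: a short derivation answers it, assuming rays leave the focus uniformly in angle ($\rho_\theta$ rays per radian), the parabola $y = x^2/(4f)$ with focus $F = (0, f)$, and $\theta$ measured from the x-axis. Each ray hits at distance $r(\theta)$ from the focus and leaves parallel to the axis at horizontal position $x(\theta)$:

$$
\begin{aligned}
r(\theta) &= \frac{2f}{1-\sin\theta}, \qquad x(\theta) = r(\theta)\cos\theta = \frac{2f\cos\theta}{1-\sin\theta},\\
\frac{dx}{d\theta} &= 2f\,\frac{-\sin\theta\,(1-\sin\theta) + \cos^2\theta}{(1-\sin\theta)^2} = \frac{2f}{1-\sin\theta} = r(\theta),\\
r &= y + f = \frac{x^2 + 4f^2}{4f} \qquad \text{(focus-directrix property)},\\
\rho_x(x) &= \frac{\rho_\theta}{\lvert dx/d\theta \rvert} = \rho_\theta\,\frac{4f}{x^2 + 4f^2}.
\end{aligned}
$$

So the outgoing parallel rays are not uniformly dense: the density is highest on the axis and falls off with a Cauchy (Lorentzian) profile. As a check, $\int_{-\infty}^{\infty} \frac{4f}{x^2+4f^2}\,dx = 2\pi$, matching the full turn of emitted directions.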
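On analysing densities more generally (other source points too): one option is to put a screen line y = H in front of the parabola, follow each ray through one reflection, and record where it crosses the screen. If the source emits uniformly in angle, the rays per unit screen length are proportional to 1/|dX/dθ|, which the sketch below estimates by a central difference. This is a plain-coordinates sketch under stated assumptions: parabola y = x²/(4f), a single reflection only (no occlusion, no second bounce), and hypothetical helper names; a GA formulation would replace the intersection and reflection steps.

```python
import math

def first_hit(f, src, d):
    """Smallest t > 0 with src + t*d on x**2 = 4*f*y, or None if the ray misses."""
    sx, sy = src
    dx, dy = d
    a = dx * dx
    b = 2.0 * sx * dx - 4.0 * f * dy
    c = sx * sx - 4.0 * f * sy
    eps = 1e-12
    if a < eps:  # (nearly) vertical ray: treat the equation as linear in t
        roots = [-c / b] if abs(b) > eps else []
    else:
        disc = b * b - 4.0 * a * c
        if disc < 0.0:
            return None
        q = -0.5 * (b + math.copysign(math.sqrt(disc), b))  # numerically stable quadratic formula
        roots = [q / a, c / q] if q != 0.0 else []
    hits = [t for t in roots if t > eps]
    return min(hits) if hits else None

def screen_x(f, src, theta, screen_y):
    """x where the once-reflected ray from src at angle theta crosses y = screen_y (or None)."""
    d = (math.cos(theta), math.sin(theta))
    t = first_hit(f, src, d)
    if t is None:
        return None
    px, py = src[0] + t * d[0], src[1] + t * d[1]
    nx, ny = 2.0 * px, -4.0 * f  # gradient of x**2 - 4*f*y at the hit point
    k = 2.0 * (d[0] * nx + d[1] * ny) / (nx * nx + ny * ny)
    rx, ry = d[0] - k * nx, d[1] - k * ny  # reflected direction d - 2(d.n)n/|n|^2
    if abs(ry) < 1e-12:
        return None
    u = (screen_y - py) / ry
    return px + u * rx if u > 0.0 else None

def screen_density(f, src, theta, screen_y, h=1e-5):
    """Rays per unit screen length for a source emitting one ray per radian,
    estimated as 1 / |dX/dtheta| by a central difference."""
    x0 = screen_x(f, src, theta - h, screen_y)
    x1 = screen_x(f, src, theta + h, screen_y)
    if x0 is None or x1 is None or x0 == x1:
        return None
    return 2.0 * h / abs(x1 - x0)

f = 1.0
for src in ((0.0, 1.0), (0.3, 0.5)):  # the focus, then an off-focus source
    for deg in (-120, -90, -60, -30):
        print(src, deg, screen_density(f, src, math.radians(deg), screen_y=20.0))
```

With the source at the focus, the estimate should agree with 4f/(x² + 4f²) at the hit position. For an off-focus source, places where dX/dθ approaches zero (a caustic) show up as the estimate blowing up.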

Any pointers to papers, videos, presentations, books etc. are highly welcome, along with, of course, explanations of the actual steps.

I find tons of theoretical material on the basics and properties of GA, but I miss actual practical examples.

Also, connected to this: is there a nice formulation for quadrics in GA, like their matrix formulation? (I also did not find the button to edit my comment.)
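For comparison on the matrix side: the standard projective form writes a conic as xᵀQx = 0 with homogeneous x = (x, y, w), and the tangent line at a point p of the conic is the polar line Qp, whose entries (a, b, c) are the coefficients of ax + by + cw = 0. Those coefficients can be read as a 2D PGA line vector a e1 + b e2 + c e0. The sketch below is plain linear algebra with hypothetical helper names, not a GA formulation of quadrics; it only shows how the matrix form connects to lines.

```python
def parabola_matrix(f):
    """Symmetric 3x3 matrix Q with x^T Q x = x**2 - 4*f*y*w (the parabola y = x**2/(4f))."""
    return [[1.0, 0.0, 0.0],
            [0.0, 0.0, -2.0 * f],
            [0.0, -2.0 * f, 0.0]]

def mat_vec(M, v):
    return [sum(M[i][j] * v[j] for j in range(3)) for i in range(3)]

def quadratic_form(M, v):
    """x^T M x; zero exactly when the homogeneous point v lies on the conic."""
    return sum(vi * wi for vi, wi in zip(v, mat_vec(M, v)))

def polar_line(M, p):
    """Polar line of p; for p on the conic it is the tangent (a, b, c): a x + b y + c w = 0."""
    return mat_vec(M, p)

Q = parabola_matrix(1.0)
p = [3.0, 2.25, 1.0]         # (3, 9/4) lies on y = x**2 / 4
print(quadratic_form(Q, p))  # 0.0
print(polar_line(Q, p))      # [3.0, -2.0, -4.5], i.e. 3x - 2y - 4.5 = 0
```

Moving the conic by a homogeneous transform T (rotation plus translation) gives Q' = T⁻ᵀ Q T⁻¹.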
