I’ve been doing property testing on my haskell projective geometric algebra library. this tests my engine, and finds cases where properties are not true.

I’ve been writing this problem up, and asking for opinions in side channels, but thought i might ask here: has anyone done any work on numeric stability with a PGA system, using floating point math, just generally?

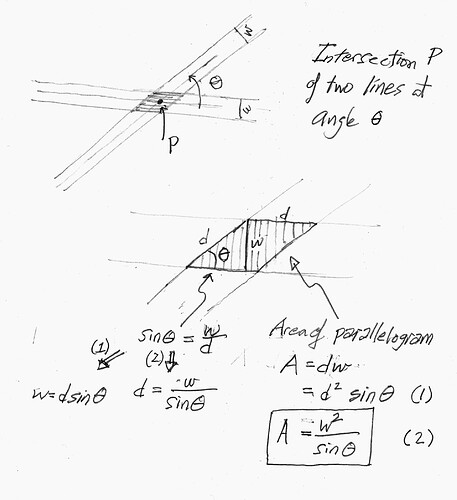

At the moment, I am trying to determine how big a circle i have to draw around the intersection of two lines, to guarantee that the real answer is somewhere in the circle.

I’m going to paste my current writeup below, but i would accept any answers people have to give about error drift with a 2D PGA system implemented in doubles. I’ve done a lot of work in this area, but think i’ve got the issue cornered in my program enough to start requestion outside help. I’m using ULP tracking at every operation, and trying to create a “what is the maximum amount off this value is” function, so i can reason about error, rather than ignore it.

Thanks everyone.

Julia Longtin

----- SNIP -----

Problem description:

We use floating point calculations. When we intersect two lines, the point that is returned is not exactly where it would be in a non-floating point world.

To try and resolve this, we’ve done ULP tracking of all of the input calculations, and have a function that transforms a loss value (“what was lost at each calculation”) to an estimated error (“what is the maximum distance from reality for this result”). This lets us define a circle around our calculated point, and guarantee the real point is within that circle.

Ideally, when we intersect two lines, we should get a point, and an error radius defining a circle around the point, such that the real, ideal world point would be guaranteed to be within said circle. With the current code, that error calculation gives the wrong value and the circle ends up too small.

We have verified that we correctly preserve all of the loss values at each calculation step, but the transformation of that loss into an error radius is incorrect. bonus: the amount of imprecision goes up as really steep angles (near 180 degrees) get involved.

What we need is a modification of the error calculation function so it correctly calculates the error circle. We can verify that this was done by making sure the tests described below all pass.

The Branch:

(yes, this is badly named, with a bad commit history)

The test:

./dist-newstyle/build/x86_64-linux/ghc-9.0.2/hslice-0.0.1/t/test-hslice/build/test-hslice/test-hslice -a 10000 --match "/Contour facetization algorithms/Arcs (Skeleton/Concave)/finds that all motorcycles intersect at the same point in a triangle/"

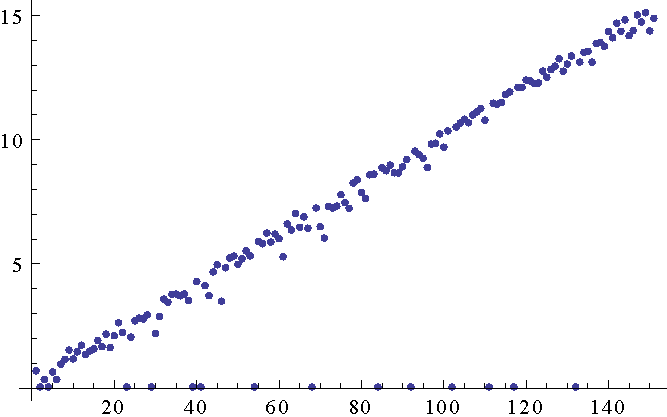

This test generates random triangles. It takes the bisector of each angle of the triangle, and then calculates the points and error circles for each pair of bisector intersections. Ideally, those intersections should be the same point. Due to floating point error, they are not, but the distance between them should be less than or equal to the sum of their error distances. However, the test will sometimes find triangles where this is not the case.

When the test fails, it dumps a section of code that you can drop into the online Projective Geometric Algebra viewer, complete with some of the associated haskell structures in comments. for example:

https://enkimute.github.io/ganja.js/examples/coffeeshop.html#bf5qID6Vx

the variables d, e, and f, contain the three points that are found. After their declarations, we have dumped the error-component of all of the operations, AND the angle (as SIN). note that all of the error cases contain one intersection with an angle that is -0.99999… so, “really steep”, and the - means “toward each other”. The point corresponding to this really steep intersection is quite far from the other two points, and it’s error circle has no space in common with the error circles of the other two intersections points.

The Test’s code:

The test is at:

HSlice/PGA.hs at fix_distanceBetweenNPLine2sWithErr · Haskell-Things/HSlice · GitHub

… but i don’t think that’s very useful. the actual point-distance-checking function is

HSlice/Intersections.hs at fix_distanceBetweenNPLine2sWithErr · Haskell-Things/HSlice · GitHub

to make this almost never fail, i have added a fudge factor to HSlice/PGA.hs at fix_distanceBetweenNPLine2sWithErr · Haskell-Things/HSlice · GitHub

The pPointFuzziness function is responsible for looking at all of the error when a point was created, and determining the maximum imprecision, or how far to draw a circle around this point, and guarantee that the real answer is within that circle. The 10000 in line 276 is completely made up, and does a really good job of solving this for a LOT of cases. Obviously, this should be replaced with a function. All of the potential inputs to that potential function are available inside of pPointFuzziness.

using a ‘1’ in place of the ‘10000’ results in a failing case within approximately random 250 tests. 10,000 gets us a few tens of thousands of tests before it can generate a skinny enough triangle to trigger the bug.